Businesses are already using ‘Emotion AI’, a type of artificial intelligence, to cash in on our emotions. Our children could be next, experts warn, as toymakers begin to exploit this new technology. What are the pros and cons of AI-enhanced toys?

‘Emotion AI’ aims to give a computer the ability to interpret and respond to our emotions by analysing our language, gestures and facial expressions. Analysts are predicting that the technology will generate a market worth $52bn (£41bn) by 2026.

While it has many applications, from predicting whether a colleague is responding well to feedback, to assessing how consumers react to advertising, one that could prove controversial is in children’s toys.

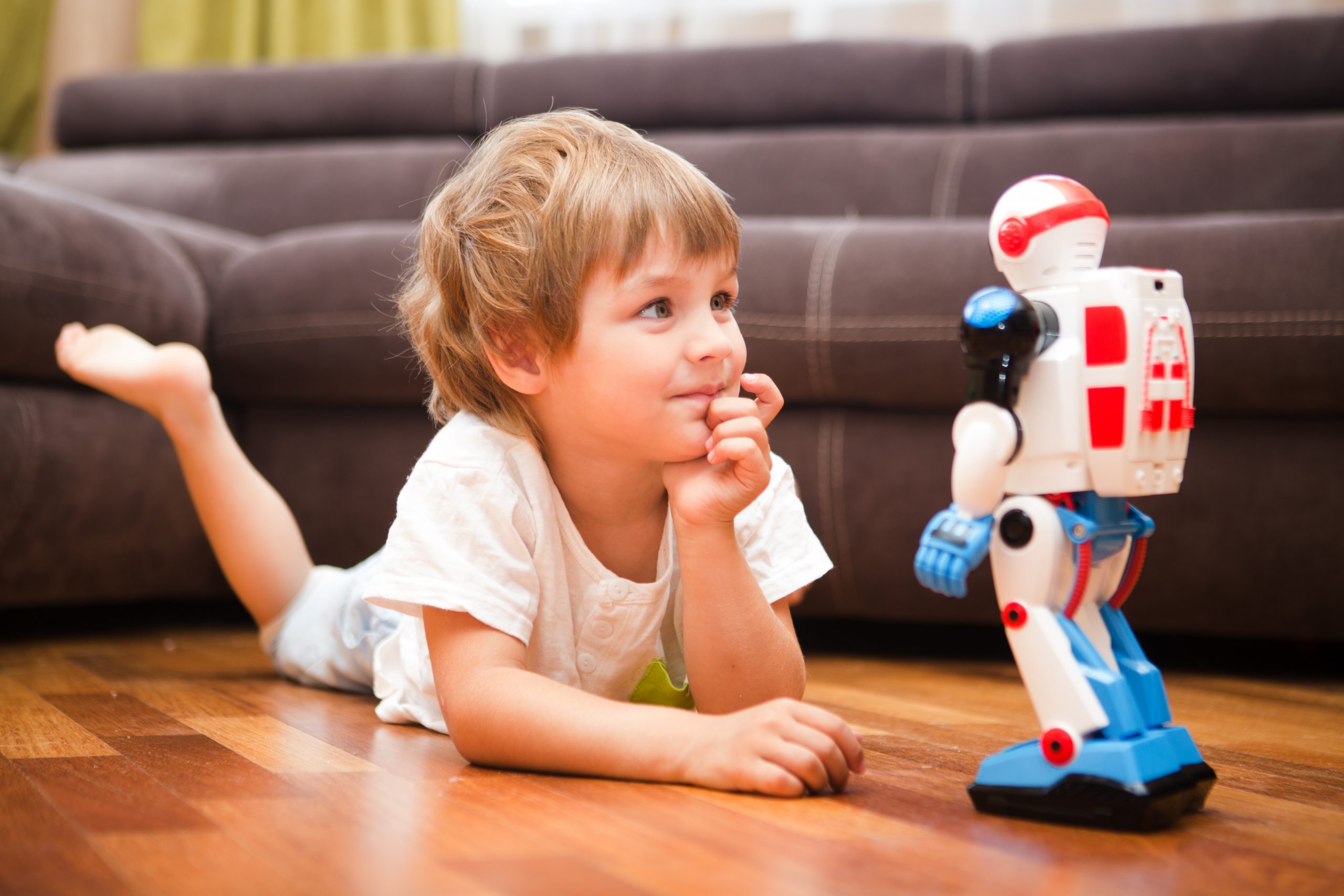

Emotion AI could be used to create even stronger bonds between children and their dolls, teddies and other toys. We already have a stormtrooper doll with facial recognition built in, and interactive robots that learn their owner’s name and behaviour. Just think how effective basic Tamagotchi digital pets were back in the late Nineties at making their child owners feel obliged to ‘feed’ them.

Add AI into the mix and the emotional pull could be that much greater. This raises many questions.

Could human-toy relationships become so realistic our children can’t tell the difference? Will that make it more difficult for them to form human relationships? What if the Emotion AI algorithms contain biases around race and gender? Could smart toys actually become a bad influence?

“This is going to be very significant in terms of child-oriented interaction,” says Andrew McStay, Professor of Digital Life at the University of Bangor and co-author of a report into Emotional Artificial Intelligence in children’s toys.

“We’re in a transitional stage, but speech analytics and natural language processing is progressing at speed.”

‘Increasing intimacy’

Gilad Rosner is the founder of the Internet of Things Privacy Forum and a member of the Cabinet Office Privacy and Consumer Advisory Group. He says that what we are seeing currently is only the beginning of placing AI in children’s toys, and predicts that we will soon see toys with a richer emotional capability, based on the facial recognition technology that is already becoming common.

“What we are seeing at this stage are some toys that can do some degree of recognition, but there will be more of it. And it’s a very short hop away to looking at faces for contextual information, rather than just trying to make sure it’s the same child who’s talking,” he explains.

Prof McStay believes this will be blended into toys with synthetic personalities, which could be very engaging to children, prompting “increasing intimacy with AI toys and related services, derived from enhanced child-agent interaction”.

Pros and cons

Should we be worried? Prof McStay says that when he engaged in a study on the potential effects of AI in toys, the parents he surveyed were broadly positive about the possible benefits.

“While they had many misgivings, they were uniformly keen that their children were able to build, engage and interact with the systems, such as through basic level programming in apps,” he says.

And Mr Rosner of the IoT Privacy Forum says that there may be particular benefits for autistic children, who often find it difficult to read human facial expressions. They might be able to engage with human emotions “in deeper, richer and better ways”, he suggests.

But both experts had concerns around the potential misuse of data generated by these types of toys, as well as the potential for reinforcing stereotypes.

“Imagine, you have a television with a camera in it that watches you, and it watches you and your family for five years, seeing how you react to different things. That is a stockpiling of very sensitive information,” says Mr Rosner.

AI-enhanced toys could do much the same, he suggests.

“These are children, and they deserve their protected class, they deserve special protections.”

Prof McStay says parents should be wary of toys with synthetic personalities and monitor them closely.

“What subtle messages is [the toy] giving out?” he asks. “Gender stereotypes, such as girls, passive, boys active, for example?”

Child’s play for hackers?

Online safety experts also urge parents to be cautious.

“If the toy were to be hacked what are the implications of that?” asks Nicholas Ineson, a former Cyber and Electromagnetic Activities (CEMA) specialist with the British Army who is now senior Penetration Tester with CyberCrowd.

“A hacker could quite easily communicate with a child and the child would be unable to differentiate conversations with the toy and the hacker. Anything could be possible.”

A chilling thought.

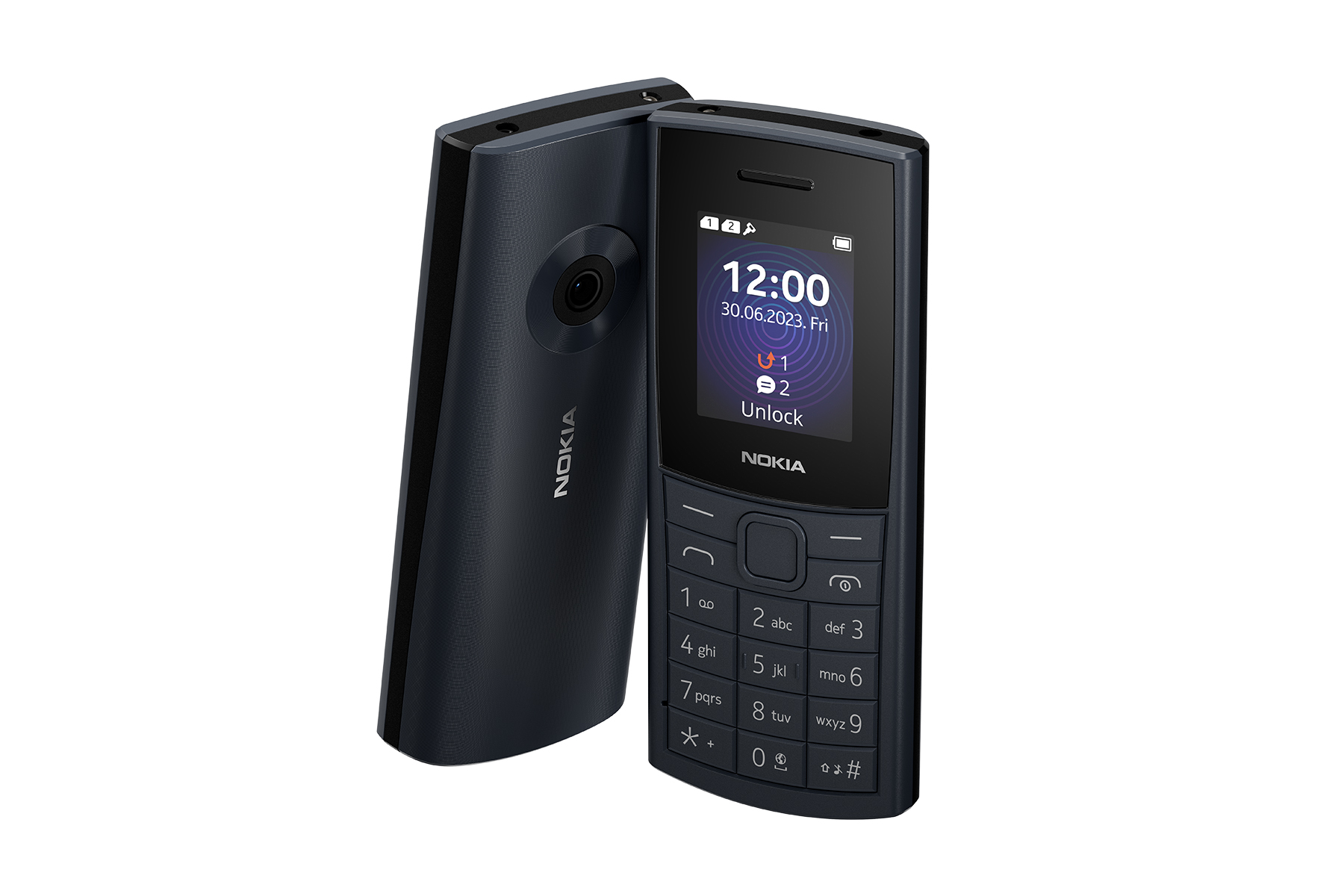

Mr Ineson suggests that in households with connected toys, particularly with AI capabilities, parents should take basic safety steps including:

- limiting time on the devices

- monitoring your child’s experience with the toy

- ensuring all software patches are updated

- talking to your child about internet safety.

Christopher Bluvshtein, online safety expert from VPNOverview, adds that parents should also ensure that any smart toys using Bluetooth or WiFi have some kind of authentication for first-time set-up as a minimum.

“Ensure that your home WiFi network has strong security. By infiltrating your WiFi network, it’s only a short hop for a malicious third party to access devices connected to it or snoop,” he adds.

‘Duty of care’

Mr Rosner points out that parents can avoid AI-connected toys if they want to, but may have less autonomy when it comes to other AI effects on their children, for example through education.

“In the case of toys, nobody’s forcing you to buy a toy. But in the case of education, you have limited freedoms. The child certainly does,” he says.

As AI plays a growing role in our children’s lives, parents should remain curious and alert to the possibilities and dangers, advises Prof McStay.

“Parents rightly want the benefits, but they’re also rightfully wary,” he says.

“As adults we have a clear duty of care to protect children and childhood, so any use of data about children and systems that judge and interact in new ways must be entered into with utmost care.

“This isn’t to say that new forms of entertainment, pleasure and education are off-limits, just that as technologists, educators, business leaders, policymakers and engaged citizens, we must get it right.”
